Welcome!

I'm Maya, an AI researcher interested in the application of Machine Learning to unsolved problems in Science, as well as in a broad range of topics such as Self-Organisation, Collective Learning and Open-Endedness.

I'm currently working on AI for automated discovery in Science as a postdoctoral researcher at Syensqo and LoF-CNRS, where I develop curiosity-driven exploration methods for the discovery of novel chemical formulations. Before that, I obtained my Ph.D. in Machine Learning under the supervision of Pierre-Yves Oudeyer and Clément Moulin-Frier in the FLOWERS team at Inria Bordeaux, together with the Poietis biotech company. During my Ph.D., I also worked with Dr. Michael Levin and his team at the Levin Lab at Tufts University.

Previously, I was at University College London, where I completed my Master of Science (MSc) in computer vision, and at Télécom ParisTech, where I did my Master of Engineering (MEng). I also spent a year as an AI research intern at Siemens Healthineers in Princeton, N.J., working on deep learning and reinforcement learning algorithms for healthcare. My extended CV can be found here.

News:

- 09/2024: Very grateful to have received a best thesis award for my work. Thank you, CSS France!

- 07/2024: Excited to start exploring applications of curiosity-driven AI for chemistry at the LoF-CNRS lab 🧪

- 04/2024: New simple-foc-assistant tutorial to learn how to build a SimpleFOC AI Assistant with RAG

- 03/2024: New sketch-transformer tutorial to train transformers+MDN to generate human-like sketches

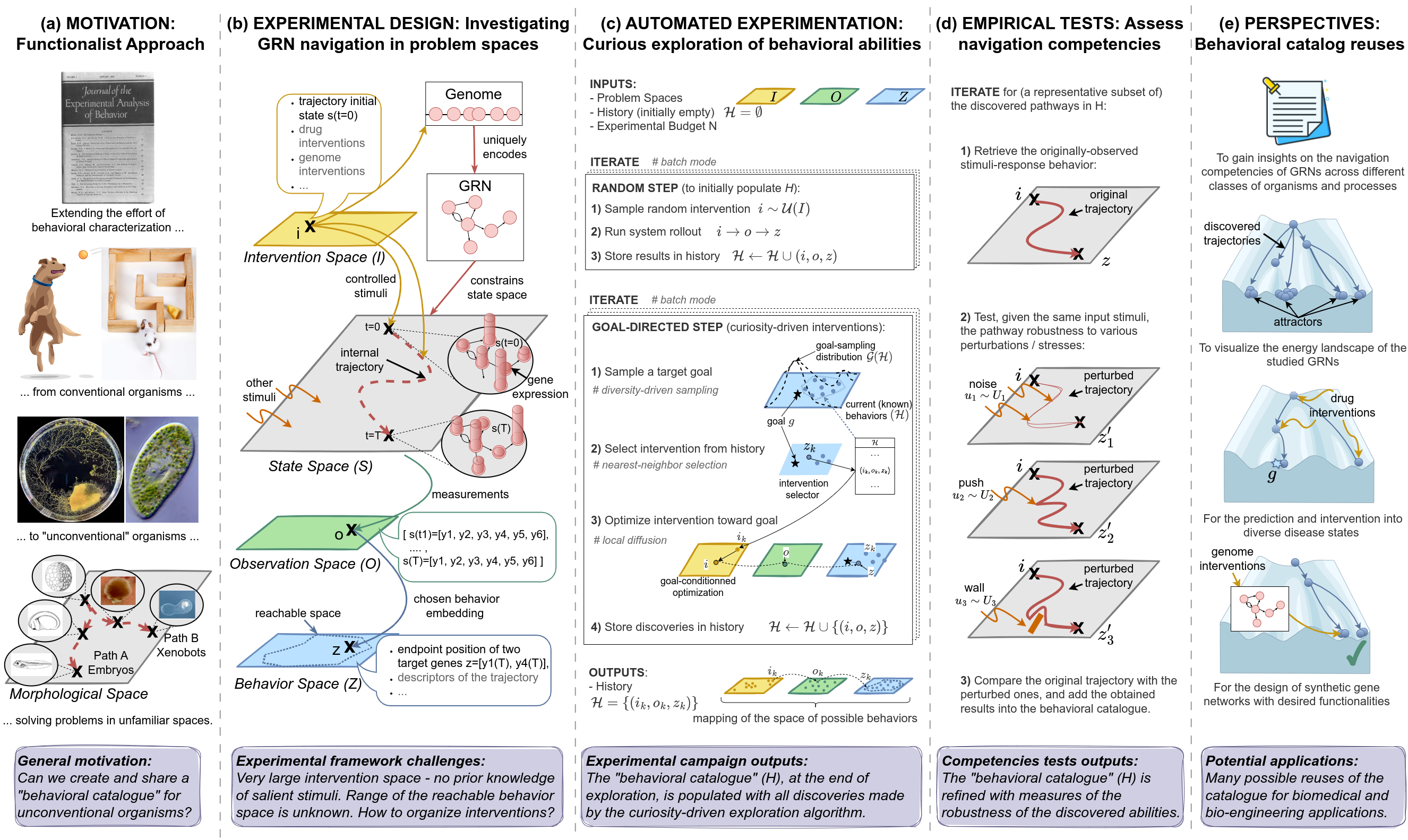

- 02/2024: Our paper about AI-driven discovery of GRN behaviors got accepted into the eLife journal

- 12/2023: Co-organized the Agent Learning in Open-Endedness Workshop held at NeurIPS 2023 🌱

- 11/2023: Defended my thesis "Curiosity-driven AI for Science: Automated Discovery of Self-Organized Structures". Thank you to my amazing jury, Alan Aspuru-Guzik, Sebastian Risi, Melanie Mitchell, Jeff Clune, and Nicolas Brodu, and to my supervisors, Pierre-Yves Oudeyer, Clément Moulin-Frier and Marc Nicodème 🙏

- 09/2023: New tutorial series on how to use diversity search to explore behaviors of biological networks

- 07/2023: Our Flow Lenia paper won the best paper award at the ALife 2023 conference 👾

Thesis:

Publications:

SBMLtoODEjax: Efficient Simulation and Optimization of Biological Network Models in JAX

AI for Science Workshop at NeurIPS 2023 (Poster)

| abstract | webpage | pdf | publication | code | documentation | tutorials |

Flow-Lenia: Towards open-ended evolution in cellular automata through mass conservation and parameter localization

ALIFE 2023 (Best Paper Award)

| abstract | webpage | pdf | publication | oral talk | code |

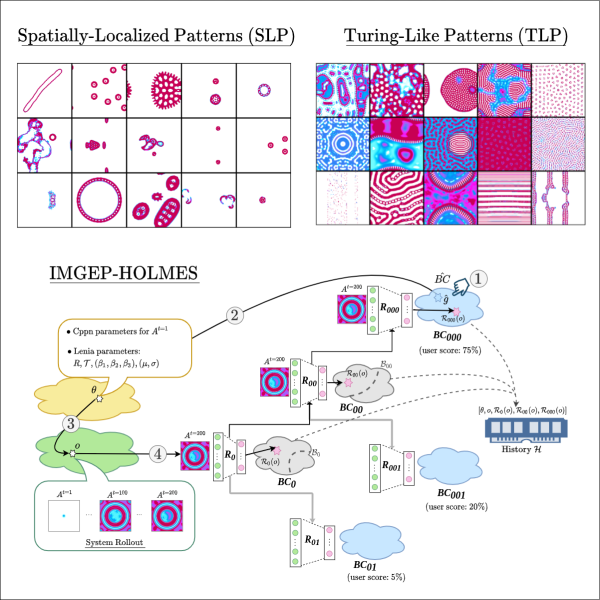

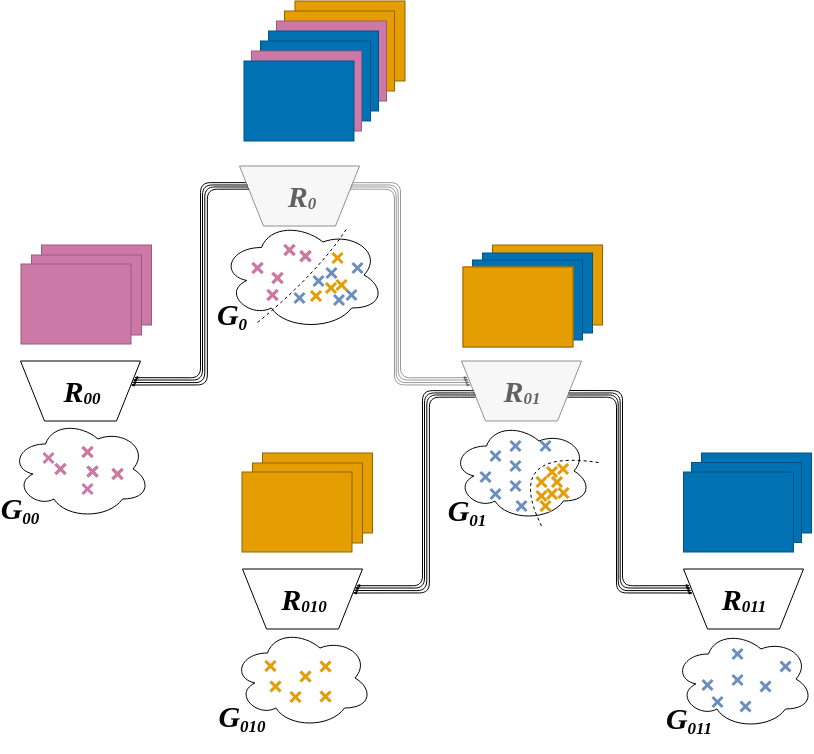

Hierarchically Organized Latent Modules for Exploratory Search in Morphogenetic Systems

NeurIPS 2020 (Oral presentation, top 1%)

| abstract | webpage | pdf | publication | poster | oral talk | code |

Progressive Growing of Self-Organized Hierarchical Representations for Exploration

Be-TR RL Workshop at ICLR 2020 (Poster)

| abstract | pdf | publication | oral talk |

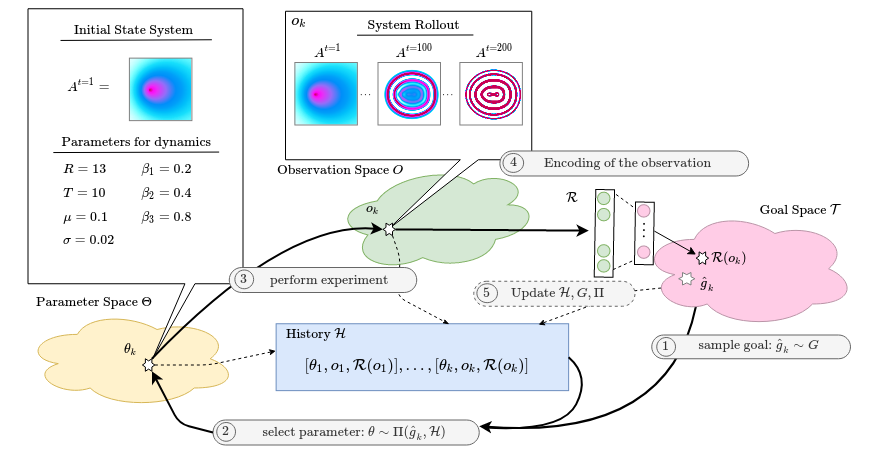

Intrinsically Motivated Exploration for Automated Discovery of Patterns in Morphogenetic Systems

ICLR 2020 (Oral presentation, top 2%)

| abstract | webpage | pdf | publication | oral talk | blog | code |

Nonlinear Adaptively Learned Optimization for Object Localization in 3D Medical Images

DLMIA workshop at MICCAI 2018, also abstract at MED-NEURIPS 2018 (Poster)

US Patent: https://patents.google.com/patent/US20190378291A1/en

| abstract | pdf | publication | poster |

Blog posts / Tutorials:

Building a SimpleFOC AI Assistant with RAG

Generate Human-like Sketches with Transformers

Diversity Search to Explore Biological Networks (tuto 2)

Diversity Search to Explore Biological Networks (tuto 1)

How to use SBMLtoODEjax (short tutorial series)

A conversation with Nicholas Guttenberg about my work

Learning Sensorimotor Agency in Cellular Automata

Meta-Diversity Search in Minecraft

Automated discovery in a continuous Game of Life